Where there is new technology, there are cybercriminals ready to wield it with ill intent.

We’re hearing a lot these days about Artificial Intelligence and the threat it may or may not pose to our industries and our individual job functions. What we’re hearing less about is the impact AI is poised to have on cybercrime, and how that will affect our businesses over the coming years.

Since we at Optimal Networks are always working to keep our clients ahead of the curve, we’ve put this article together to explore how the cybercrime industry has used digital impersonation tactics to its advantage, and how those tactics might evolve over the next few years.

We use the term “industry” there deliberately; cybercrime functions as a full business operation with dedicated departments, not some guy in his mother’s basement with time to spare. Cybercrime brings in annual revenue three times that of Walmart, and the industry as a whole is valued at an estimated $1.5 trillion. As we’ve written before, we should never underestimate the ingenuity of these organized professionals.

We say this not to scare you, but to set proper context for what we’re about to explore next: how today, in the wake of AI going mainstream, we’re on the brink of some mind-bending innovation in scam tactics.

EXPLOITING TRUST THROUGH IMPERSONATION

While some victims are still duped by so-called Nigerian prince scams (yes, still), only a relatively small portion of the population is susceptible to this sort of fraud. But just about any of us can be tricked into giving a malicious actor (MA) what they want if that MA successfully impersonates a person or entity we trust.

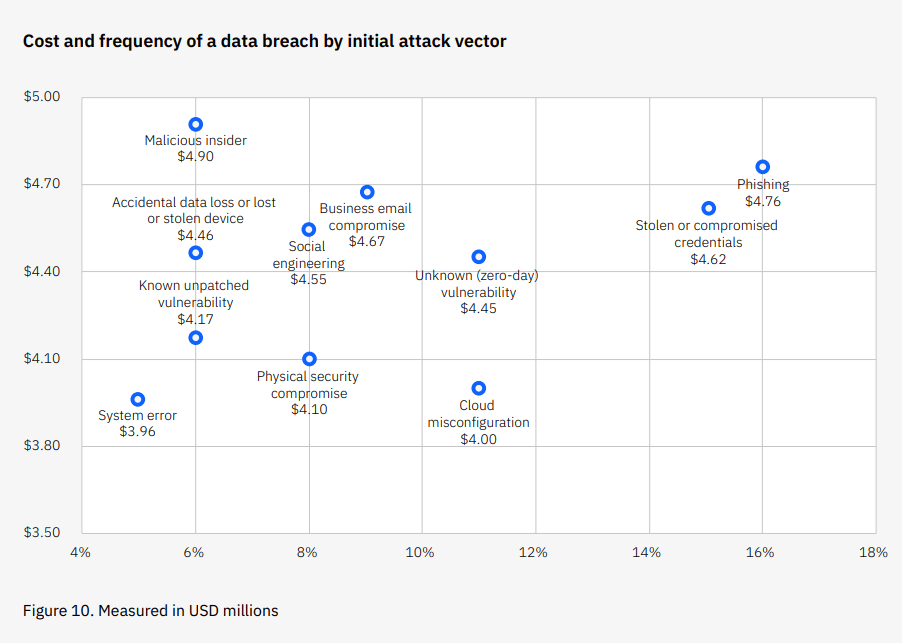

This is the concept behind phishing, which is the most common initial attack vector for data breaches and the second costliest.

Phishing is particularly effective when paired with a tactic called spoofing, in which MAs craft emails that, at a quick glance, appear to come directly and legitimately from the source they’re impersonating, be it your bank or your boss. Throw enough urgency into the mix to cloud our judgment and scammers have themselves a win.

A RECENT PIVOT: SMISHING AND VISHING

There are no signs of email phishing falling by the wayside, but cybercriminals have not been blind to a couple of factors affecting their “market” over the past several years:

- The ubiquity of mobile devices and increasing daily screen time.

- A rise in corporate security awareness training that emphasizes email phishing.

Fortunately, many of us are getting quite good at identifying and avoiding phishing emails. But we don’t often approach text messages or phone calls with the same caution. And we’re on our phones a lot.

These conditions have given rise to smishing (SMS phishing), such as text messages from “your CEO” that actually come from an MA, and vishing (voice phishing), such as proactive service calls from “Microsoft” that also come from an MA.

We’ve taken a step forward, but the cybercriminals have taken two.

ARTIFICIAL INTELLIGENCE, DEEPFAKES, AND VOICE CLONING AS EMERGING TRENDS

2023 is proving to be the year of Artificial Intelligence. From the absolute explosion of ChatGPT (100 million users within 2 months) to the launch of AI tool after AI tool, this advanced technology has made its mark on both the general public and the corporate realm.

Just try to write up a LinkedIn post today and you’ll be prompted to use AI. We’ll see this sort of embedded AI in 40% of enterprise applications by 2024, Gartner predicts.

There are a ton of practical applications for this technology that could improve operational efficiency in our businesses. And for years, AI-powered cybersecurity defenses have been the most effective at minimizing the costs of a successful breach ($1.76MM in costs avoided per breach, on average). So we won’t be the ones to say AI is all bad. But give this conversation a listen:

This tool (Air AI), which is still in beta testing, can hold 5- to 40-minute conversations and take autonomous action as though it were a human, and its ability to scale is almost unlimited. Can you think of a few [thousand] ways this could be used for nefarious purposes in a business context?

In addition to this ability to impersonate an anonymous but corporeal human, AI also opens the door to imitate specific individuals over audio and video.

- Deepfake videos – Making someone appear to say and do things someone else did by embedding their likeness into video footage. A well-known example: this manufactured video of Jennifer Lawrence/Steve Buscemi.

- Deepfake audio/voice cloning – Making someone appear to say things they did not by manipulating audio to sound like their voice. A well-known example: this manufactured video of Mark Zuckerberg.

By the time we’ve learned to side-step smishing and vishing scams, will we be facing phone calls from our boss’s phone number, in our boss’s voice, urgently pleading for a wire transfer?

WHAT TO EXPECT IN THE MID-SIZED BUSINESS SPACE

The short answer is “no, we don’t think so.”

We don’t expect voice cloning to be a focus at this stage; when we spent time testing both free and paid services to mimic our CEO’s voice, the results were of quite poor quality.

Deepfakes will also be a rarity for now, as most individuals of influence in the SMB space don’t have enough of a digital presence to feed into AI systems; there isn’t enough data for AI to learn from and create a convincing replica.

So what do we actually need to prepare ourselves, our teams, and our businesses for on the cybersecurity front? What we very much expect to see is an elevation of existing tactics: more scalable, more frequent, and more convincing attacks.

- Tools like WormGPT will make phishing nearly effortless.

- Tools like Air AI (above) will make vishing just as easy.

- AI-powered email and voice scams combined will make for incredibly convincing attacks.

This brings us back to the old refrain: SECURITY AWARENESS TRAINING IS KEY. Your team needs to know:

- The technology and types of scams that are out there.

- How exactly they should deal with suspicious emails, texts, or phone calls (including hanging up and calling known parties back from a verified number).

- What sort of activity is and is not permitted on company devices.

Mandatory, in-depth, example-heavy, and consistent training is imperative to minimizing your chances of suffering a breach, which costs an average of $4.45MM.

When was your last company-wide session?

NEED HELP?

If you’d rather not have to worry about cybersecurity and technology trends on your own, we’d love to discuss how our proactive IT services or strategic consulting services (including security audits and policy writing) could help.

If you want a quick, free evaluation of how your overall IT strategy aligns with best practices, take our self-assessment for law firms or for associations and get your IT Maturity Score in 6 minutes or less.